Basics of NLP : Zero to One

Natural Language Processing (NLP) is a field of artificial intelligence that focuses on the interaction between computers and humans through natural language. It involves the analysis, understanding, and generation of human language. In today’s digital world, NLP plays a crucial role in various applications such as chatbots, sentiment analysis, language translation, and more.

Overview of NLP

NLP consists of systematic processes for analyzing, understanding, and deriving information from text data. It involves tasks like part-of-speech tagging, parsing, and named entity recognition.

With the exponential growth of digital data, NLP has become essential for extracting insights, analyzing sentiment, and creating personalized user experiences. It powers search engines, virtual assistants, social media analytics, and much more.

Getting Started with Python for NLP

Python is a versatile and beginner-friendly programming language that is widely used in NLP due to its simplicity and robust libraries.

Setting up the Python Environment for NLP

To start with NLP in Python, you need to set up the environment by installing Python and relevant libraries like NLTK, spaCy, and gensim.
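Once the libraries are installed (typically with pip, for example `pip install nltk spacy gensim`), a quick check like the one below can confirm which of them are importable. This is a minimal sketch that uses only the standard library; the `REQUIRED` list and the `check_installed` helper are illustrative names, not part of any NLP package.

```python
from importlib.util import find_spec

# Import names of the libraries mentioned above (spaCy is imported as "spacy")
REQUIRED = ["nltk", "spacy", "gensim"]


def check_installed(packages):
    """Return a dict mapping each package name to True if it can be imported."""
    return {name: find_spec(name) is not None for name in packages}


status = check_installed(REQUIRED)
for name, ok in status.items():
    print(f"{name}: {'installed' if ok else 'missing'}")
```

Any library reported as missing can be installed before moving on to the examples that follow.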

Basic Python Libraries for NLP Tasks

Libraries like NLTK (Natural Language Toolkit) and spaCy provide tools and resources for tasks such as tokenization, part-of-speech tagging, and named entity recognition.

Text Preprocessing

Before analyzing text data in NLP, it is essential to preprocess it to clean and organize the text for further analysis.

Tokenization: Breaking Text into Individual Words or Sentences

Tokenization involves breaking a text into tokens, which can be words, characters, or subwords. This step is crucial for further text analysis tasks.

In its simplest form, tokenization can be done by splitting text on whitespace. NLTK provides word_tokenize() for splitting a string into word tokens and sent_tokenize() for splitting text into sentences; the latter is known as sentence tokenization.

Input Code:

import nltk

# Downloads every NLTK resource, which is large; the tokenizers below only need 'punkt' ('punkt_tab' on recent NLTK releases)

nltk.download('all')

from nltk import word_tokenize, sent_tokenize

# Adjacent string literals inside parentheses are joined into one string

sent = ("Tokenization is the first step in any NLP pipeline. "

        "It has an important effect on the rest of your pipeline.")

print(word_tokenize(sent))

print(sent_tokenize(sent))

Output:

['Tokenization', 'is', 'the', 'first', 'step', 'in', 'any', 'NLP', 'pipeline', '.', 'It', 'has', 'an', 'important', 'effect', 'on', 'the', 'rest', 'of', 'your', 'pipeline', '.']

['Tokenization is the first step in any NLP pipeline.', 'It has an important effect on the rest of your pipeline.']
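To see why a dedicated tokenizer is preferable to plain whitespace splitting, the following sketch compares `str.split()` with two small regex-based helpers. The helper names `simple_word_tokenize` and `simple_sent_tokenize` are hypothetical and are not NLTK functions; they only approximate what a real tokenizer does and will mishandle cases such as abbreviations ("Dr.") or contractions.

```python
import re


def simple_word_tokenize(text):
    """Split text into word tokens and standalone punctuation marks."""
    # \w+ matches runs of letters, digits or underscores;
    # [^\w\s] matches a single punctuation character
    return re.findall(r"\w+|[^\w\s]", text)


def simple_sent_tokenize(text):
    """Split text after '.', '!' or '?' when followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


sample = "Tokenization is the first step. It affects the rest of your pipeline!"

# Whitespace splitting leaves punctuation glued to words ("step.", "pipeline!")
print(sample.split())
print(simple_word_tokenize(sample))
print(simple_sent_tokenize(sample))
```

The whitespace version keeps "step." and "pipeline!" as single tokens, while the regex version separates the punctuation, which is closer to the word_tokenize() behavior shown above.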

Stopword Removal: Filtering Out Common Words

Stopwords are common words like ‘and’, ‘the’, ‘is’ that add little value in NLP tasks. Removing these words helps in focusing on the meaningful content.

The NLTK library ships with a list of words that are considered stopwords for the English language. Some of them are: [i, me, my, myself, we, our, ours, ourselves, you, you’re, you’ve, you’ll, you’d, your, yours, yourself, yourselves, he, most, other, some, such, no, nor, not, only, own, same, so, than, too, very, s, t, can, will, just, don, don’t, should, should’ve, now, d, ll, m, o, re, ve, y, ain, aren, aren’t, couldn, couldn’t, didn, didn’t]. To add your own words, convert the list to a Python set and use its add() or update() method.

To check the list of stopwords you can type the following commands:

Input Code:

import nltk

from nltk.corpus import stopwords

nltk.download('stopwords')

print(stopwords.words('english'))

Output:

['i', 'me', 'my', 'myself', 'we', 'our', 'ours', 'ourselves', 'you', "you're", "you've", "you'll", "you'd", 'your', 'yours', 'yourself', 'yourselves', 'he', 'him', 'his', 'himself', 'she', "she's", 'her', 'hers', 'herself', 'it', "it's", 'its', 'itself', 'they', 'them', 'their', 'theirs', 'themselves', 'what', 'which', 'who', 'whom', 'this', 'that', "that'll", 'these', 'those', 'am', 'is', 'are', 'was', 'were', 'be', 'been', 'being', 'have', 'has', 'had', 'having', 'do', 'does', 'did', 'doing', 'a', 'an', 'the', 'and', 'but', 'if', 'or', 'because', 'as', 'until', 'while', 'of', 'at', 'by', 'for', 'with', 'about', 'against', 'between', 'into', 'through', 'during', 'before', 'after', 'above', 'below', 'to', 'from', 'up', 'down', 'in', 'out', 'on', 'off', 'over', 'under', 'again', 'further', 'then', 'once', 'here', 'there', 'when', 'where', 'why', 'how', 'all', 'any', 'both', 'each', 'few', 'more', 'most', 'other', 'some', 'such', 'no', 'nor', 'not', 'only', 'own', 'same', 'so', 'than', 'too', 'very', 's', 't', 'can', 'will', 'just', 'don', "don't", 'should', "should've", 'now', 'd', 'll', 'm', 'o', 're', 've', 'y', 'ain', 'aren', "aren't", 'couldn', "couldn't", 'didn', "didn't", 'doesn', "doesn't", 'hadn', "hadn't", 'hasn', "hasn't", 'haven', "haven't", 'isn', "isn't", 'ma', 'mightn', "mightn't", 'mustn', "mustn't", 'needn', "needn't", 'shan', "shan't", 'shouldn', "shouldn't", 'wasn', "wasn't", 'weren', "weren't", 'won', "won't", 'wouldn', "wouldn't"]

The following example demonstrates how to filter out stop words from a sample text:

Input Code:

from nltk.corpus import stopwords

from nltk.tokenize import word_tokenize

# Sample text

text = "This is an example sentence showcasing the removal of stop words."

# Tokenize the text

words = word_tokenize(text)

# Build the stop word set once so that each membership check is fast

stop_words = set(stopwords.words('english'))

# Filter out stop words

filtered_words = [word for word in words if word.lower() not in stop_words]

print("Original Text:", words)

print("Text after Removing Stopwords:", filtered_words)

Output:

Original Text: ['This', 'is', 'an', 'example', 'sentence', 'showcasing', 'the', 'removal', 'of', 'stop', 'words', '.']

Text after Removing Stopwords: ['example', 'sentence', 'showcasing', 'removal', 'stop', 'words', '.']

You can also define a custom set of stop words when needed. The following example filters the same tokens using only a custom set, so the NLTK stop words are left in place:

Input Code:

# Custom stop words; 'words' is the token list from the previous example

custom_stopwords = {'example', 'showcasing'}

filtered_words_custom = [word for word in words if word.lower() not in custom_stopwords]

print("Text after Removing Custom Stopwords:", filtered_words_custom)

Output:

Text after Removing Custom Stopwords: ['This', 'is', 'an', 'sentence', 'the', 'removal', 'of', 'stop', 'words', '.']
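In practice, custom stop words are usually combined with a base list rather than used on their own. The sketch below shows the idea with a set union. `BASE_STOPWORDS` is a short, hand-picked stand-in (an assumption made so the snippet runs without downloads); with NLTK it would be `set(stopwords.words('english'))`.

```python
# Stand-in for a library stop word list (hypothetical subset, kept short for illustration)
BASE_STOPWORDS = {"this", "is", "an", "the", "of"}

# Domain-specific additions
CUSTOM_STOPWORDS = {"example", "showcasing"}

# Set union merges both collections without duplicates
all_stopwords = BASE_STOPWORDS | CUSTOM_STOPWORDS

tokens = ["This", "is", "an", "example", "sentence", "showcasing",
          "the", "removal", "of", "stop", "words", "."]

# Compare in lowercase so "This" matches the stop word "this"
filtered = [tok for tok in tokens if tok.lower() not in all_stopwords]
print(filtered)  # ['sentence', 'removal', 'stop', 'words', '.']
```

Using sets keeps each membership check fast and makes it easy to add or remove words later with `add()`, `update()`, or `discard()`.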

Stemming and Lemmatization

Stemming and lemmatization are techniques that reduce each word to its base or root form. This standardizes words for analysis and can improve accuracy. For example, “play” is the root of words like “plays”, “playing”, and “played”.

Stemming:

Stemming removes affixes (in practice, mostly suffixes) from words to obtain their root form, known as the stem. It is simpler and faster than lemmatization, but the result is not always a real word, as stems like ‘natur’ and ‘languag’ in the example below show.

Input Code:

from nltk.stem import PorterStemmer

from nltk.tokenize import word_tokenize

sentence = "Natural language processing is a fascinating field for data scientists."

# Tokenize the sentence

words = word_tokenize(sentence)

# Initialize the Porter Stemmer

stemmer = PorterStemmer()

# Stem each word in the sentence

stemmed_words = [stemmer.stem(word) for word in words]

# Print the original and stemmed words

print("Original words:", words)

print("Stemmed words:", stemmed_words)

Output:

Original words: ['Natural', 'language', 'processing', 'is', 'a', 'fascinating', 'field', 'for', 'data', 'scientists', '.']

Stemmed words: ['natur', 'languag', 'process', 'is', 'a', 'fascin', 'field', 'for', 'data', 'scientist', '.']
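To make the idea of suffix stripping concrete, here is a deliberately naive stemmer. It is not the Porter algorithm, which applies a much longer sequence of rewrite rules; the `SUFFIXES` tuple, the `naive_stem` function, and the `min_stem_len` parameter are all illustrative assumptions.

```python
SUFFIXES = ("ing", "ed", "s")  # illustrative subset, checked in this order


def naive_stem(word, min_stem_len=3):
    """Strip the first matching suffix, keeping at least min_stem_len characters."""
    lowered = word.lower()
    for suffix in SUFFIXES:
        if lowered.endswith(suffix) and len(lowered) - len(suffix) >= min_stem_len:
            return lowered[: -len(suffix)]
    return lowered


for w in ["Plays", "Playing", "Played", "Play", "is", "fascinating"]:
    print(w, "->", naive_stem(w))
```

All three inflected forms of "play" collapse to the same stem, while "fascinating" becomes "fascinat", which illustrates why stems are not guaranteed to be real words.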

Lemmatization:

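Lemmatization, on the other hand, reduces words to their dictionary form, known as the lemma. Unlike stemming, lemmatization ensures that the resulting word is a valid word in the language. Note that NLTK’s WordNetLemmatizer treats every word as a noun unless a part of speech is passed through its pos argument, which is why words such as ‘is’ and ‘processing’ stay unchanged in the output below.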

Input Code:

from nltk.stem import WordNetLemmatizer

from nltk.tokenize import word_tokenize

# Example sentence

sentence = "Natural language processing is a fascinating field for data scientists."

# Tokenize the sentence

words = word_tokenize(sentence)

# Initialize the WordNet Lemmatizer

lemmatizer = WordNetLemmatizer()

# Lemmatize each word in the sentence

lemmatized_words = [lemmatizer.lemmatize(word) for word in words]

# Print the original and lemmatized words

print("Original words:", words)

print("Lemmatized words:", lemmatized_words)

Output:

Original words: ['Natural', 'language', 'processing', 'is', 'a', 'fascinating', 'field', 'for', 'data', 'scientists', '.']

Lemmatized words: ['Natural', 'language', 'processing', 'is', 'a', 'fascinating', 'field', 'for', 'data', 'scientist', '.']
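The role of the part of speech in lemmatization can be illustrated with a toy lookup table. This is only a sketch: `LEMMA_TABLE` and `toy_lemmatize` are hypothetical, hand-written stand-ins for the WordNet dictionary that a real lemmatizer consults, and the default of "n" mirrors the noun default mentioned for WordNetLemmatizer.

```python
# Tiny hand-written lemma table keyed by (word, part of speech); purely illustrative
LEMMA_TABLE = {
    ("is", "v"): "be",
    ("was", "v"): "be",
    ("processing", "v"): "process",
    ("scientists", "n"): "scientist",
    ("better", "a"): "good",
}


def toy_lemmatize(word, pos="n"):
    """Return the lemma for (word, pos) if known, else the word unchanged."""
    return LEMMA_TABLE.get((word.lower(), pos), word)


print(toy_lemmatize("is"))                   # looked up as a noun: no entry, unchanged
print(toy_lemmatize("is", pos="v"))          # looked up as a verb
print(toy_lemmatize("processing", pos="v"))
print(toy_lemmatize("scientists"))
```

The same surface form can map to different lemmas depending on the tag supplied, which is why lemmatizers are often fed the output of a part-of-speech tagger.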

Part-of-Speech Tagging: Identifying Grammatical Parts of Speech

Part-of-speech (POS) tagging is a fundamental task in Natural Language Processing (NLP) that assigns a part-of-speech label (such as noun, verb, or adjective) to each word in a sentence based on its grammatical role. These labels help downstream steps interpret how words function within a sentence.

Input Code:

import nltk

from nltk.tokenize import word_tokenize

nltk.download('averaged_perceptron_tagger')

# Example sentence

sentence = "Natural language processing is a fascinating field for data scientists."

# Tokenize the sentence

words = word_tokenize(sentence)

# Perform POS tagging

pos_tags = nltk.pos_tag(words)

# Print the original words and their POS tags

print("Original words:", words)

print("POS tags:", pos_tags)

Output:

Original words: ['Natural', 'language', 'processing', 'is', 'a', 'fascinating', 'field', 'for', 'data', 'scientists', '.']

POS tags: [('Natural', 'JJ'), ('language', 'NN'), ('processing', 'NN'), ('is', 'VBZ'), ('a', 'DT'), ('fascinating', 'JJ'), ('field', 'NN'), ('for', 'IN'), ('data', 'NNS'), ('scientists', 'NNS'), ('.', '.')]
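Tagged tokens are plain (word, tag) tuples, so they are easy to post-process. The sketch below copies the tagged output shown above into a list, so it runs without NLTK, then counts the tags and keeps only the nouns. It relies on the fact that the tags follow the Penn Treebank convention, where noun tags start with "NN".

```python
from collections import Counter

# Tagged tokens copied from the output above
pos_tags = [("Natural", "JJ"), ("language", "NN"), ("processing", "NN"),
            ("is", "VBZ"), ("a", "DT"), ("fascinating", "JJ"), ("field", "NN"),
            ("for", "IN"), ("data", "NNS"), ("scientists", "NNS"), (".", ".")]

# Count how often each tag occurs
tag_counts = Counter(tag for _, tag in pos_tags)

# Keep only nouns: Penn Treebank noun tags (NN, NNS, NNP, NNPS) start with "NN"
nouns = [word for word, tag in pos_tags if tag.startswith("NN")]

print(tag_counts)
print(nouns)
```

Filtering by tag like this is a common first step for keyword extraction, since nouns tend to carry most of a sentence's topical content.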

Building NLP Models

With preprocessing in place, you can build NLP models for tasks such as sentiment analysis, named entity recognition, and text classification.

Sentiment Analysis

Sentiment analysis aims to understand the emotions and opinions expressed in textual data. It can be used on social media data, product reviews, and customer feedback.
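One of the simplest approaches to sentiment analysis is lexicon-based scoring: count positive and negative words and compare the totals. The sketch below assumes two tiny hand-made word sets (`POSITIVE` and `NEGATIVE`) and hypothetical helpers `sentiment_score` and `label`. Production systems rely on much larger lexicons or trained models and also handle negation, which this example does not.

```python
import re

# Hypothetical mini-lexicon; real systems use far larger, curated resources
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "slow"}


def sentiment_score(text):
    """Return (#positive matches - #negative matches) for the text."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


def label(text):
    """Map the numeric score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


reviews = [
    "I love this phone, the camera is great!",
    "Terrible battery and slow delivery.",
    "The package arrived on Tuesday.",
]
for review in reviews:
    print(label(review), "|", review)
```

The three sample reviews are labeled positive, negative, and neutral respectively, since the last one contains no lexicon words.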

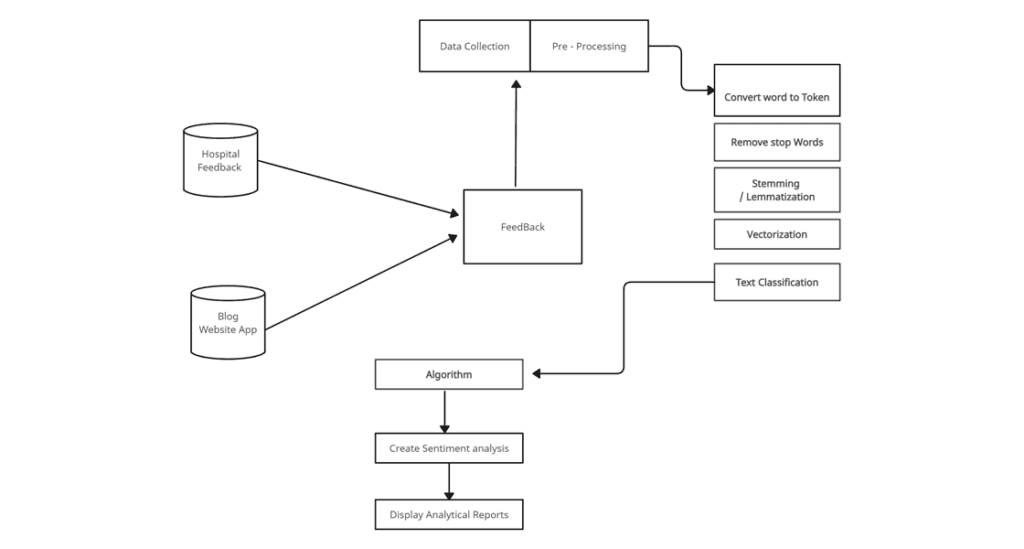

Below is the example flow of real-time sentiment analysis:

Named Entity Recognition

Named Entity Recognition (NER) involves identifying and classifying named entities like names, dates, and locations in text. It is useful for information extraction and categorization.
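Libraries such as spaCy ship trained models for NER, but the underlying task can be illustrated with simple rules. The following is a minimal rule-based sketch, not how trained models work: `LOCATIONS` is a hypothetical gazetteer (a list of known names), `DATE_PATTERN` only recognizes dates written like "12 March 2024", and `toy_ner` is an illustrative helper name.

```python
import re

# Hypothetical gazetteer of known locations; a real system would learn these from data
LOCATIONS = {"Paris", "London", "India"}

# Matches dates written as "<day> <month name> <4-digit year>"
DATE_PATTERN = re.compile(
    r"\b\d{1,2} (?:January|February|March|April|May|June|July|August|"
    r"September|October|November|December) \d{4}\b"
)


def toy_ner(text):
    """Return a list of (entity_text, label) pairs found by simple rules."""
    entities = [(m.group(), "DATE") for m in DATE_PATTERN.finditer(text)]
    # Check every capitalized word against the gazetteer
    for token in re.findall(r"\b[A-Z][a-z]+\b", text):
        if token in LOCATIONS:
            entities.append((token, "LOCATION"))
    return entities


print(toy_ner("The conference opens in Paris on 12 March 2024."))
# [('12 March 2024', 'DATE'), ('Paris', 'LOCATION')]
```

Rules like these are brittle (they miss unseen names and other date formats), which is the main reason statistical and neural NER models are preferred for real text.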

Text Classification

Text classification involves categorizing text into predefined classes or categories. It is used in spam detection, topic categorization, and sentiment classification.
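A classic baseline for text classification is multinomial Naive Bayes over word counts. The sketch below implements it from scratch with Laplace (add-one) smoothing so that it runs with the standard library alone. The training sentences are made up for illustration, and `train_naive_bayes` and `predict` are hypothetical helper names rather than functions from any library.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(samples):
    """samples: list of (text, label). Returns (word counts, doc counts, vocabulary)."""
    word_counts = defaultdict(Counter)   # label -> word -> count
    label_counts = Counter()             # label -> number of documents
    vocab = set()
    for text, label in samples:
        tokens = text.lower().split()
        word_counts[label].update(tokens)
        label_counts[label] += 1
        vocab.update(tokens)
    return word_counts, label_counts, vocab


def predict(text, model):
    """Return the label with the highest log-probability under the model."""
    word_counts, label_counts, vocab = model
    total_docs = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # Log prior plus Laplace-smoothed log likelihood of each known token
        score = math.log(label_counts[label] / total_docs)
        total_words = sum(word_counts[label].values())
        for token in text.lower().split():
            if token in vocab:  # words never seen in training are ignored
                score += math.log((word_counts[label][token] + 1) / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Made-up training data for illustration only
training = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch with the project team", "ham"),
]
model = train_naive_bayes(training)
print(predict("free prize inside", model))
print(predict("team meeting on monday", model))
```

With this toy data the first message is classified as spam and the second as ham. Working in log space avoids numeric underflow when many small probabilities are multiplied.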

Advanced NLP Techniques in Python

Advanced NLP techniques allow for deeper analysis and understanding of text data.

Word Embeddings

Word embeddings represent words as vectors in a multi-dimensional space, capturing semantic relationships between words. They are used in machine learning models for text data.
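Semantic closeness between word vectors is commonly measured with cosine similarity. The sketch below uses hand-made 3-dimensional vectors purely for illustration; the values in `EMBEDDINGS` are invented, whereas real embeddings are learned from large corpora and usually have hundreds of dimensions.

```python
import math

# Hand-made vectors for illustration only; the numbers are not learned from data
EMBEDDINGS = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


king_queen = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["queen"])
king_apple = cosine_similarity(EMBEDDINGS["king"], EMBEDDINGS["apple"])
print("king vs queen:", round(king_queen, 3))
print("king vs apple:", round(king_apple, 3))
```

Vectors that point in similar directions score close to 1, so "king" and "queen" come out far more similar than "king" and "apple" in this toy setup.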

Topic Modeling

Topic modeling is a technique to discover abstract topics within a collection of text documents. It can be used for content recommendation, trend analysis, and document clustering.

Text Generation

Text generation involves creating new text based on patterns in existing text data. It is used in chatbots, language modeling, and content generation.
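A small way to see "patterns in existing text" at work is a bigram Markov chain: record which words follow each word, then sample a path through those records. This is a minimal sketch with a made-up one-line corpus; `build_bigram_model` and `generate` are hypothetical helper names, and modern text generation uses neural language models rather than this technique.

```python
import random
from collections import defaultdict


def build_bigram_model(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model


def generate(model, start, max_words=10, seed=0):
    """Walk the chain from `start`, sampling a follower at each step."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same text
    output = [start]
    # Stop at max_words or when the last word has no recorded follower
    while len(output) < max_words and model.get(output[-1]):
        output.append(rng.choice(model[output[-1]]))
    return " ".join(output)


corpus = "the cat sat on the mat and the dog sat on the rug"
model = build_bigram_model(corpus)
print(generate(model, "the"))
```

Every adjacent word pair in the generated text also appears somewhere in the corpus, which is why the output looks locally plausible even though the sentence as a whole may not make sense.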

Conclusion

This journey through NLP shows how much it can reshape the way we interact with textual data, from fundamental tasks like tokenization and part-of-speech tagging to more advanced techniques like sentiment analysis and topic modeling. Dive into the world of NLP, explore its capabilities, and unleash your creativity in text analysis and understanding!
